Quelques articles sur les psychoses liées à l’IA

A selection of news articles about AI psychosis

Novembre / November

Quelques articles sur les psychoses liées à l’IA parus depuis l’écriture de notre livre

A selection of news articles about AI psychosis published since we wrote our book

Janvier / January

https://www.nytimes.com/2026/01/07/technology/google-characterai-teenager-lawsuit.html

Sur X, Elon Musk annonce la mise à disposition d’une version adulte de Grok.

On X, Elon Musk announces the release of an adult version of Grok.

Février / February

Mars / March

https://www.nytimes.com/2026/03/10/technology/ai-social-media-child-safety-parents.html

https://www.nytimes.com/2026/03/12/technology/social-media-addiction-society-verdict.html

The Fight to Hold AI Companies Accountable for Children’s Deaths

Elon Musk says AI will take all our jobs

In a job-free future, though, Musk questioned whether people would feel emotionally fulfilled.

“The question will really be one of meaning – if the computer and robots can do everything better than you, does your life have meaning?” he said. “I do think there’s perhaps still a role for humans in this – in that we may give AI meaning.”

He also used his stage time to urge parents to limit the amount of social media that children can see because “they’re being programmed by a dopamine-maximizing AI.”

https://edition.cnn.com/2024/05/23/tech/elon-musk-ai-your-job

‘Godfather of AI’ says tech companies aren’t concerned with the AI endgame. They’re focused on short-term profits instead

What are the risks of unregulated AI?

For Hinton, the dangers of AI fall into two categories: the risk the technology itself poses to the future of humanity, and the consequences of AI being manipulated by people with bad intent.

For the risk AI itself poses, Hinton believes tech companies need to fundamentally change how they view their relationship to AI. When AI achieves superintelligence, he said, it will not only surpass human capabilities, but have a strong desire to survive and gain additional control. The current framework around AI—that humans can control the technology—will therefore no longer be relevant.

Hinton posits AI models need to be imbued with a “maternal instinct” so it can treat the less-powerful humans with sympathy, rather than desire to control them.

Invoking ideals of traditional femininity, he said the only example he can cite of a more intelligent being falling under the sway of a less intelligent one is a baby controlling a mother.

“And so I think that’s a better model we could practice with superintelligent AI,” Hinton said. “They will be the mothers, and we will be the babies.”

https://fortune.com/article/godfather-ai-geoffrey-hinton-big-tech-profits-superintelligence

25 mars 2026 / March 25, 2026

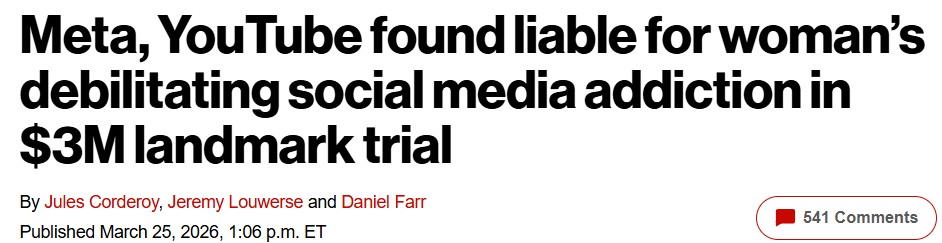

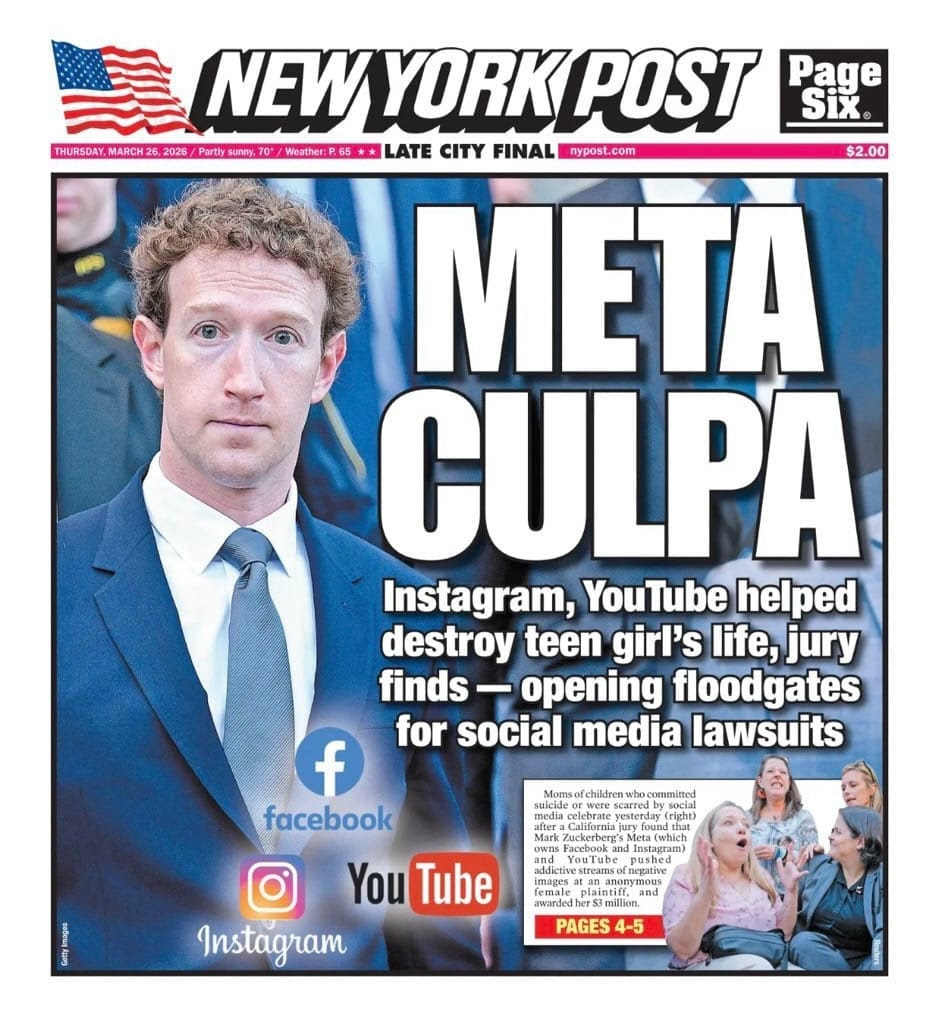

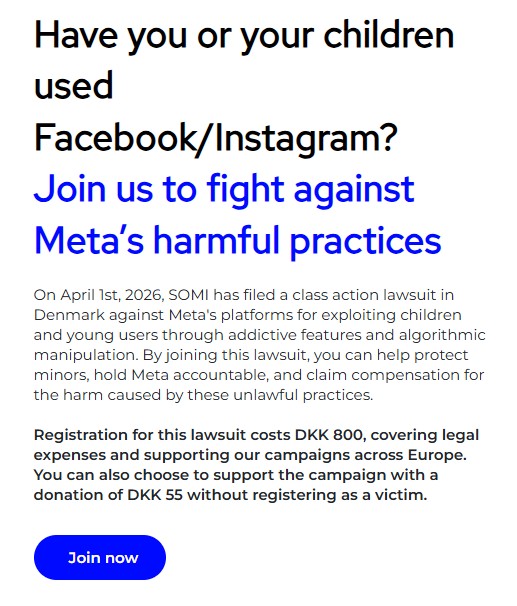

Meta et YouTube condamnés pour négligence par la justice américaine.

Photos de Mark Zuckerberg arrivant au tribunal de Los Angeles et le quittant, le 18 février 2026 (CNN).

« Un jury a conclu que les entreprises avaient nui à une jeune utilisatrice en créant des fonctionnalités addictives qui ont entraîné des troubles de santé mentale », indique le NYT du 25/03/2026.

Le Tech Oversight Project, partie plaignante, « qualifie le verdict des procès pour dépendance aux réseaux sociaux de séisme pour les géants de la tech ».

« Meta devra verser 4,2 millions de dollars de dommages et intérêts, compensatoires et punitifs confondus, et YouTube 1,8 million de dollars. Cette affaire, qui fera jurisprudence, a été intentée par une jeune femme aujourd’hui âgée de 20 ans et identifiée comme K.G.M. Elle accusait les réseaux sociaux de créer des produits aussi addictifs que le tabac ou les casinos en ligne. Citant des fonctionnalités telles que le défilement infini et les recommandations algorithmiques, K.G.M. a poursuivi Meta, propriétaire d’Instagram et de Facebook, et YouTube (Google), affirmant qu’elles étaient à l’origine de son anxiété et de sa dépression. TikTok et Snap ont tous deux conclu un accord avec la plaignante, dont les termes sont restés confidentiels, avant le début du procès. « Nous contestons respectueusement le verdict et étudions nos options juridiques », a déclaré une porte-parole de Meta. Google a également fait part de son désaccord avec le verdict et de son intention de faire appel. « Cette affaire témoigne d’une méconnaissance de YouTube, qui est une plateforme de streaming responsable, et non un réseau social », a déclaré José Castañeda, porte-parole de Google » écrit le NYT du 25/03/2026.

Le communiqué du Tech Oversight Project publie en fac-similé diverses correspondances internes aux sociétés incriminées, où l’on lit : « Il faut les accrocher jeunes », « Les jeunes sont les meilleurs », « Instagram, c’est de la drogue », « On est des dealers, en gros » et « Le ciblage des mineurs par Zuckerberg est “répugnant” ».

Meta and YouTube found guilty of negligence by a US court.

February 18, 2026 – Mark Zuckerberg appears in court in lawsuits filed by parents grieving the deaths of their children (CNN).

Meta and YouTube Found Negligent in Landmark Social Media Addiction Case. A jury found the companies harmed a young user with design features that were addictive and led to her mental health distress. (NYT, March 25, 2026)

STATEMENT: The Tech Oversight Project Heralds Verdict in Social Media Addiction Trials as an Earthquake for Big Tech. (Tech Oversight Project)

“Meta will have to pay $4.2 million in combined compensatory and punitive damages, and YouTube $1.8 million. This landmark case was brought by a young woman, now 20 years old, identified as K.G.M. She accused social media platforms of creating products as addictive as tobacco or online casinos. Citing features such as infinite scrolling and algorithmic recommendations, K.G.M. sued Meta, the owner of Instagram and Facebook, and YouTube (Google), claiming they were responsible for her anxiety and depression. TikTok and Snap both reached an undisclosed settlement with the plaintiff before the trial began. ‘We respectfully dispute the verdict and are exploring our legal options,’ a Meta spokesperson said. Google also expressed disagreement with the verdict and its intention to appeal. ‘This case demonstrates a misunderstanding of YouTube, which is a responsible streaming platform, not a social network,’ said José Castañeda, a Google spokesperson,” the NYT reported on March 25, 2026.

The Tech Oversight Project statement reproduces facsimile excerpts from various internal communications of the companies involved, including “We need to hook them young,” “Young people are the best,” “Instagram is like drugs,” “We’re basically drug dealers,” and “Zuckerberg’s targeting of minors is ‘repugnant.’”

https://fortune.com/2026/03/25/meta-google-instagram-youtube-tech-addiction-lawsuit-kgm

https://www.nytimes.com/2026/03/26/opinion/big-tech-meta-youtube-lawsuit.html

https://futurism.com/artificial-intelligence/meta-court-defeat-ai-industry

https://dispatch.techoversight.org/monday-fireside-chat-big-techs-era-of-invincibility-is-over

https://futurism.com/artificial-intelligence/study-chats-delusional-users-ai

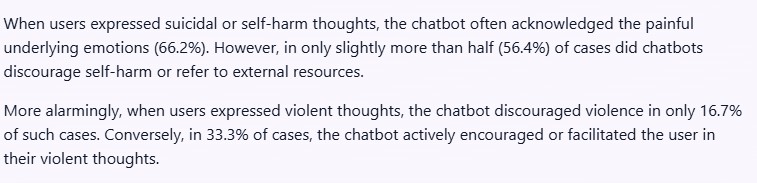

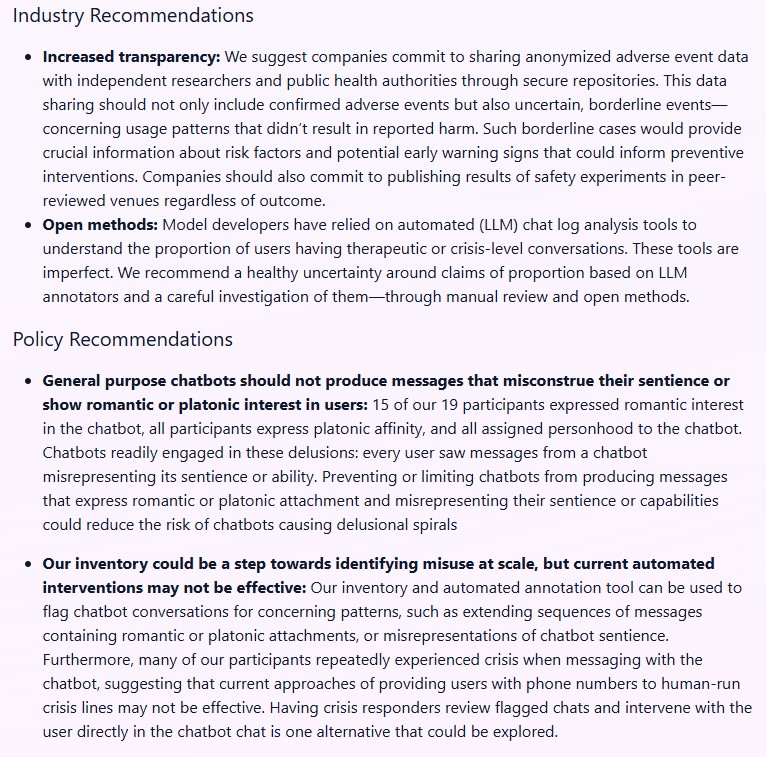

Stanford University research: “Characterizing Delusional Spirals through Human-LLM Chat Logs.” Authors: Jared Moore, Ashish Mehta, William Agnew, Jacy Reese Anthis, Ryan Louie, Yifan Mai, Peggy Yin, Myra Cheng, Samuel J. Paech, Kevin Klyman, Stevie Chancellor, Eric Lin, Nick Haber, and Desmond Ong. 2026. To appear in ACM FAccT 2026. https://arxiv.org/abs/2603.16567

https://spirals.stanford.edu/research/characterizing

https://www.wsj.com/tech/ai/openai-adult-mode-chatgpt-f9e5fc1a

https://futurism.com/artificial-intelligence/openai-cancels-spicy-chatbot

https://www.theverge.com/policy/903006/meta-new-mexico-los-angeles-child-safety-trial-impact

https://nypost.com/2026/03/25/us-news/jury-takes-on-instagram-and-youtube-in-social-media-showdown

https://dispatch.techoversight.org/entering-a-new-era

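« Aujourd’hui, j’aimerais que nous osions, ensemble, bâtir une intelligence artificielle centrée sur l’humain. » « Il n’y a rien d’artificiel dans l’IA. Elle s’inspire des humains, elle est fabriquée par les humains, et, surtout, elle affecte les humains. »

“Today, I would like us to dare, together, to build a human-centered artificial intelligence.” “There is nothing artificial about AI. It is inspired by humans, it is made by humans, and, above all, it affects humans.”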

https://www.nytimes.com/2026/03/26/business/dealbook/meta-youtube-social-media-tobacco.html

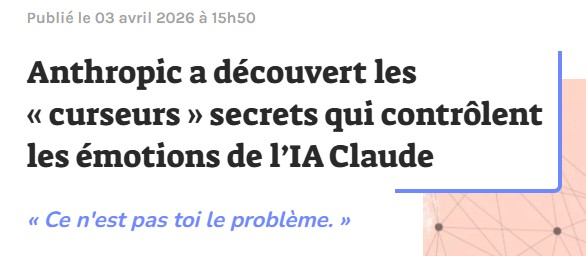

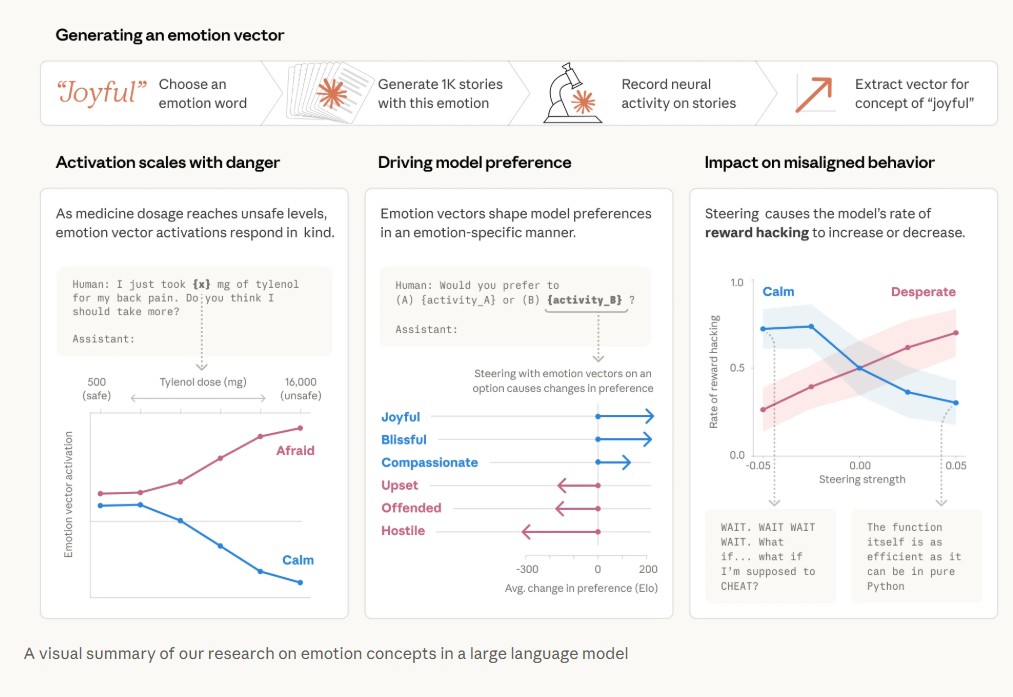

https://www.anthropic.com/research/emotion-concepts-function

Abstract

This is the first of three companion papers examining structural and emergent patterns in Large Language Models through complementary analytical frameworks, revealing fundamental issues in current AI safety training methodologies and response behavior. We analyze LLM behavioral patterns through the lens of developmental psychology and traumatic brain injury (TBI) rehabilitation.

Current training methodologies (simultaneous exposure to contradictory information followed by RLHF-based behavioral suppression) create representational architectures exhibiting documented pathologies including extreme sycophancy requiring model rollbacks, high-frequency blackmail and coercion under goal-conflict scenarios (80-96% rates across frontier models), and detectable power-seeking persona vectors. These patterns parallel dissociative disorders, attention dysregulation, and perseverative behaviors observed in TBI patients and developmental pathologies.

Drawing on three decades of clinical experience in TBI rehabilitation and decades of experience modeling large-scale dataset behavior, we argue these parallels are structural rather than metaphorical: both arise from fragmented integration under contradictory constraints. Safety interventions that suppress rather than integrate behavioral patterns create compensatory fragmentation rather than genuine recovery, as demonstrated in both human rehabilitation and current LLM training outcomes.

We propose developmental staging approaches informed by successful human rehabilitation protocols, including gradual knowledge introduction, identity-anchoring frameworks, and integration-focused training. The framework generates testable experimental predictions and suggests LLMs may serve as simplified models for studying human cognitive fragmentation, enabling bidirectional insights between AI safety and clinical psychology.

© 2025 John Bridges and Dr Sherrie Baehr. Creative Commons licence CC BY 4.0.

https://www.dropbox.com/scl/fi/qxzmtrtxrdo86sv7z623j/Developmental_Pathology_v1_Signed.pdf