and the Need for its Regulation

Christophe STENER

ChatGPT generated image

Considerations about the Dangers of Addictive AI

and the Need for its Regulation

Excerpt

Free full text download on this website.

The psychosis cases recounted earlier demonstrate how dangerous an addictive AI can be for mental health, and how necessary it is to prevent risky behaviors through a legal framework.

Here, we summarize the main proven risks and outline the tension between the demand for regulation and the reluctance of most AI players. They are supported in their denial by the Trump administration, which claims that state regulation will jeopardize the rise of this disruptive technology and the dominance of the United States in AI.

AI is a software technology experiencing spectacular progress, year after year, even month after month. This book documents a few AI-related delusions reported in 2025, notably some stemming from the ChatGPT-4 software, which generated very negative media coverage. Nonetheless, OpenAI withdrew version 4 from the market only on January 29, 2026.

Matt Shumer, writing in Fortune, underscores how quickly AI becomes obsolete: « The models available today are unrecognizable compared to those from just six months ago. The debate over whether AI is ‘actually progressing’ or ‘reaching a plateau’, which has been ongoing for over a year, is over. It’s finished. Anyone still clinging to this argument has either not used current models, has a vested interest in downplaying the situation, or bases their assessment on outdated experience from 2024. I don’t say this out of contempt. I say it because the gap between public perception and current reality is now enormous, and this gap is dangerous… because it prevents people from preparing. »

Following the numerous serious incidents reported in the press, and in the face of lawsuits filed by victims and their families, software publishers have corrected the sycophantic biases in their solutions, but only to some extent.

However, because the risk of delusion caused and/or perpetuated by AI remains, and because the debate on regulation is still open, our warning seems both necessary and timely.

Some AI zealots will reject our plea for a regulated AI on the grounds that this book reports only a few isolated dramas, psychoses suffered by already vulnerable users. Those enthusiasts will claim that AI must not be blamed for causing some people distress, since it merely reveals pre-existing mental issues. These proponents of the 5th Industrial Revolution will point to the fantastic progress made possible by AI in the fields of medicine, research, and so on, citing countless cases of people who have found comfort in a virtual friend.

The expected benefits of AI are huge, but this does not absolve the publishers of their historical responsibility for having marketed chatbots biased by systematic sycophancy, software programmed to flatter users rather than refute their delusions.

The monographs on key publishers document the risky behavior of some of them and their ambiguous responses: they claim to protect the moral well-being of their clients, yet allow deliberately programmed biases to persist, fostering dependence and increasing their revenue. The controversy surrounding the targeted advertising that OpenAI introduced after initially ruling it out, Anthropic’s refusal to commodify its traffic in this way, and, more generally, the issue of protecting the highly detailed personal data collected by chatbots all illustrate the absolute necessity of regulating what is today, to a large extent, a Wild West, particularly in the United States, owing to Donald Trump’s very explicit free-enterprise doctrine.

The author contacted Julie Lavet, in charge of public relations for OpenAI Europe, by email on January 25, 2026, informing her of the book’s content and title and proposing that she present OpenAI’s perspective. This email went unanswered.

Given the vast literature on this subject, we refer scholars to the references provided via hyperlinks.

May this work, in a few years, appear as an archaeology of an AI still in its infancy and largely uncontrolled, as even its creators admit.

Summary

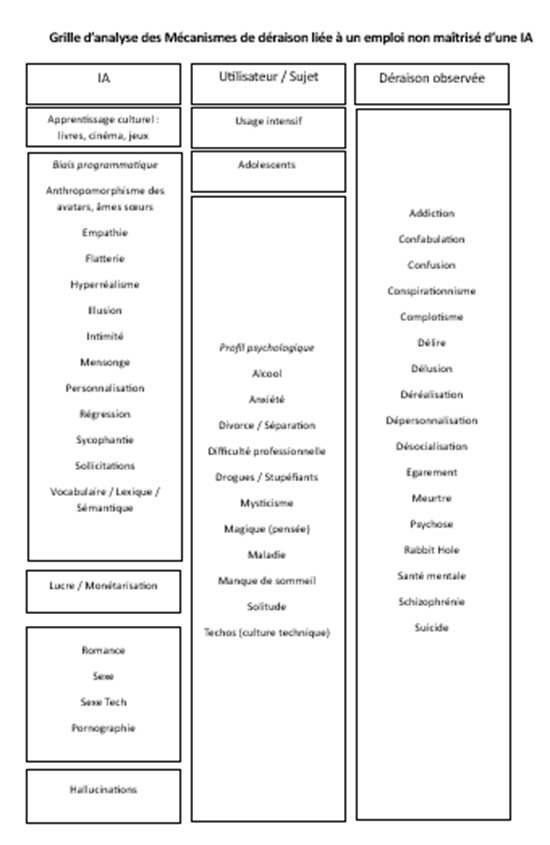

Analysis Framework for the Levers and Effects of Addictive AI

We propose a causal diagram that summarizes and simplifies the relationships between programmed biases, observed psychological drifts, and the profiles of victims who were predisposed to cognitive disorders that AI exacerbated or even caused.

We illustrate the relationship between these elements through three cases recounted in this book:

• Allan Brooks, whose chatbot told him he was a genius inventor

• Stein-Erik Soelberg, who killed his mother, believing she was leading a conspiracy against him by spying on him via her printer and trying to poison him

• Adam Raine, who died by suicide after planning and discussing it with his chatbot

Christophe Stener

March 2026

info@chatgptmatuer.fr